Research Projects

With the ever-growing amount of data produced by humans as well as IoT devices and smart environments, there is a strong need for tools and natural user interfaces to explore and analyse these large datasets forming part of the Big Data era. There has been a lot of research on how to efficiently batch process large datasets and create some static reports for the analysis of the underlying dataset, but recently there is an increasing interest in real-time processing and query adaptation in Big Data environments, enabling so-called human-in-the-loop data exploration.

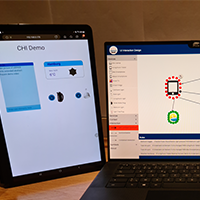

OpenHPS is an open source hybrid positioning system. The framework allows developers to create a process network with a graph topology for computing the position of a person or asset. We offer modules that provide positioning methods (e.g. Wi-Fi, Bluetooth or visual positioning) or algorithms. These methods and algorithms are represented as nodes in the process network.
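The idea of positioning methods and algorithms as nodes in a graph can be illustrated with a small, self-contained sketch. It is plain Python and does not use the OpenHPS API; the `Node` class, the `fixed` and `average` helpers and the coordinates are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

Position = Tuple[float, float]


@dataclass
class Node:
    """One processing step in the network (hypothetical, not the OpenHPS API)."""
    name: str
    process: Callable[[List[Position]], Position]
    inputs: List["Node"] = field(default_factory=list)

    def pull(self) -> Position:
        # Evaluate all upstream nodes first, then apply this node's own step.
        upstream = [node.pull() for node in self.inputs]
        return self.process(upstream)


def fixed(position: Position) -> Callable[[List[Position]], Position]:
    """Stand-in for a positioning method that would normally read a sensor."""
    return lambda _upstream: position


def average(upstream: List[Position]) -> Position:
    """Naive fusion algorithm: the mean of all upstream estimates."""
    xs = [p[0] for p in upstream]
    ys = [p[1] for p in upstream]
    return (sum(xs) / len(xs), sum(ys) / len(ys))


# Two positioning methods feed one fusion algorithm.
wifi = Node("wifi", fixed((2.0, 4.0)))
ble = Node("ble", fixed((4.0, 6.0)))
fusion = Node("fusion", average, inputs=[wifi, ble])

print(fusion.pull())  # (3.0, 5.0)
```

A real network would replace the fixed estimates with sensor-driven nodes and the mean with a proper fusion algorithm; the graph structure stays the same.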

Nowadays, scholars use various ICT tools as part of their scholarly workflows. Individual scholarly activities that might be supported by these ICT tools include the finding, storing and analysing of information as well as the writing, sharing and publishing of research results. However, despite the widespread use of ICT tools in the research process (more than 600 ICT tools have been identified), a scholar’s information frequently gets fragmented across these different tools.

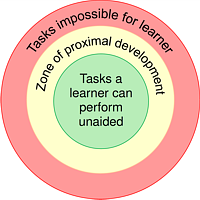

A major aim of our research is to make programming education accessible to everyone, especially people from underrepresented or underprivileged groups. That means thinking about the ways we teach CS, how students experience CS and the intrinsic or external motivations students may have for learning about computing, but also about tools to help both educators and learners. Importantly, these tools should be accessible to a wide audience and should not require complex LMS setups that might not be usable by small-scale organisations.

This research project aims to explore the integration of physical and digital information to enhance human-information interaction through next generation user interfaces. Realising physical carriers’ irreplaceable qualities and digital resources’ versatile abilities, we seek to bridge the gap between these two realms. We believe that instead of striving for the complete replacement of physical information carriers, a hybrid approach that combines digital and physical documents offers a more meaningful solution.

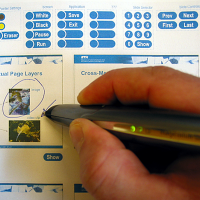

iPaper is a platform for interactive paper applications that has been realised as an extension of the iServer cross-media link server. The iPaper framework supports the rapid prototyping, development and deployment of interactive paper applications.

Existing presentation tools such as Microsoft's PowerPoint, Apple's Keynote or OpenOffice Impress represent a de facto standard for giving presentations. We question these existing slide-based presentation solutions due to some of their inherent limitations. Our new MindXpres presentation tool addresses these problems by introducing a radically new presentation format. In addition to a clear separation of content and visualisation, our HTML5 and JavaScript-based presentation solution offers advanced features such as the non-linear traversal of the presentation, hyperlinks, transclusion, semantic linking and navigation of information, multimodal input, dynamic interaction with the content, the import of external presentations and more.

The overall goal of this project is to develop a design toolkit for knowledge physicalisation and augmentation that will enable the design of solutions that work in synergy with a user’s knowledge processes and support them during knowledge tasks in educational as well as workplace settings. While there is a significant body of work in the fields of tangible user interfaces or data physicalisation, there is a gap in understanding the design space for solutions that support scribbling behaviour during daily activities in education and workplaces across the reality-virtuality continuum.

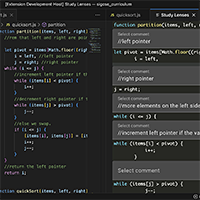

Explorotron is a Visual Studio Code (VS Code) extension designed to help students learn from arbitrary JavaScript code examples by providing different interactive views, or so-called study lenses, each focusing on different aspects of the code. The individual study lenses are based on computing education research and follow best practices such as the PRIMM methodology, a peel-away design or an environment closely resembling a professional programming environment to support skill transfer.

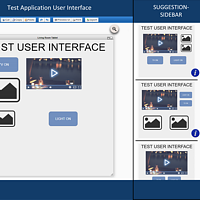

eSPACE is an innovative end-user authoring tool that enables individuals without programming knowledge to create their own smart applications through a user-friendly web interface. The tool offers a range of views to support the creation process, including the interaction view that utilises a visual pipeline metaphor to define interaction rules, and the rules view that serves as a more textual counterpart for defining rules using if-then statements.
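The if-then rules mentioned for the rules view can be pictured with a minimal sketch. This is not eSPACE's internal rule format: the `Rule` class, the `apply_rules` function and the sensor names and thresholds are assumptions made up for this example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List

State = Dict[str, float]


@dataclass
class Rule:
    """A single if-then rule (illustrative; not eSPACE's internal format)."""
    description: str
    condition: Callable[[State], bool]
    action: Callable[[State], None]


def apply_rules(rules: List[Rule], state: State) -> List[str]:
    """Fire every rule whose condition holds and return the fired descriptions."""
    fired = []
    for rule in rules:
        if rule.condition(state):
            rule.action(state)
            fired.append(rule.description)
    return fired


rules = [
    Rule(
        "IF temperature > 25 THEN turn the fan on",
        condition=lambda s: s["temperature"] > 25,
        action=lambda s: s.update(fan=1.0),
    ),
    Rule(
        "IF light < 100 THEN switch the lamp on",
        condition=lambda s: s["light"] < 100,
        action=lambda s: s.update(lamp=1.0),
    ),
]

# Hypothetical sensor readings: warm room, bright daylight.
state = {"temperature": 28.0, "light": 300.0, "fan": 0.0, "lamp": 0.0}
fired = apply_rules(rules, state)
print(fired)
```

With these readings only the fan rule fires, so `state["fan"]` becomes `1.0` while the lamp stays off.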

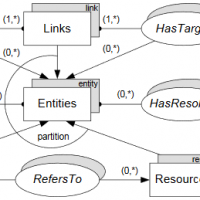

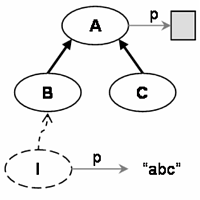

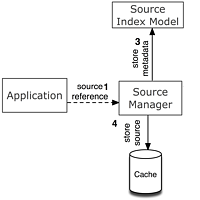

The iServer platform supports the integration of cross-media resources based on the resource-selector-link (RSL) model. iServer not only enables the definition of links between different types of digital media, but can also be used for integrating physical and digital resources.
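As a rough illustration of the resource-selector-link idea, the sketch below models a link from a region on a printed page to a video. It is a simplified reading of the RSL model and not the iServer implementation; the class and field names, the `targets_of` helper and the sample resources are assumptions.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass(frozen=True)
class Resource:
    """A whole media item, digital or physical (e.g. a video or a paper page)."""
    name: str
    medium: str


@dataclass(frozen=True)
class Selector:
    """Addresses a part of a resource, e.g. a region on a printed page."""
    resource: Resource
    region: str


Entity = Union[Resource, Selector]


@dataclass
class Link:
    """Connects source entities to target entities, possibly across media types."""
    sources: List[Entity]
    targets: List[Entity]


def targets_of(entity: Entity, links: List[Link]) -> List[Entity]:
    """Return every target reachable from the given entity in one step."""
    return [t for link in links if entity in link.sources for t in link.targets]


# Hypothetical example: a photo on a paper brochure links to a digital video.
page = Resource("brochure page 3", medium="paper")
photo_area = Selector(page, region="x=10,y=20,w=80,h=60")
video = Resource("museum-tour.mp4", medium="video")

links = [Link(sources=[photo_area], targets=[video])]
print(targets_of(photo_area, links))
```

Because the link source is the selector rather than the whole page, only the photo region leads to the video; the page as a whole has no outgoing links in this example.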

Discovering smart devices in the physical world often requires indoor positioning systems. Bluetooth Low Energy (BLE) beacons are widely used for creating scalable, cost-effective positioning systems for indoor navigation, tracking, and location awareness. However, existing BLE specifications either lack sufficient information or rely on proprietary databases for beacon location. In response, we introduce SemBeacon—a novel BLE advertising solution and semantic ontology extension. SemBeacon is backward compatible with iBeacon, Eddystone, and AltBeacon. Through a prototype application, we demonstrate how SemBeacon enables real-time positioning systems that describe both location and environmental context. Unlike Eddystone-URL beacons, which broadcast web pages, SemBeacon focuses on broadcasting semantic data about the environment and available positioning systems using linked data.

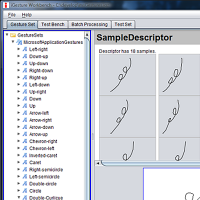

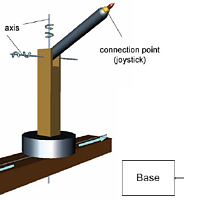

iGesture is an open source gesture recognition framework supporting application developers who would like to add new gesture recognition functionality to their application as well as designers of new gesture recognition algorithms.

The PaperPoint application is a simple but very effective tool for giving PowerPoint presentations. The slide handouts are printed on Anoto paper together with some additional paper buttons for controlling the PowerPoint presentation. A digital pen is used to remotely control the PowerPoint presentation over wireless Bluetooth technology.

Initiative to improve teaching aids (e.g. interactive paper) for rural areas of India in collaboration with the bj institute.

With the rise of different electronic devices such as smartphones, tablets and smartwatches, and the emergence of "smart things" forming part of the Internet of Things (IoT), user interfaces (UIs) have to be adapted in order to cope with the different input and output methods as well as device characteristics. In addition to the challenges of dealing with these rapidly changing technologies, user interface designers struggle to adapt their UIs to evolving user needs and preferences, resulting in a poor user experience.

The ArtVis project investigates advanced visualisation techniques in combination with a tangible user interface to explore a large source of information (Web Gallery of Art) about European painters and sculptors from the 11th to the mid-19th century. Specific graphical and tangible controls allow the user to explore the vast amount of artworks based on different dimensions (faceted browsing) such as the name of the painter, the museum where an artwork is located, the type of art or a specific period of time. The name ArtVis reflects the fact that we bring together artworks and the field of Information Visualisation (InfoVis) to achieve a playful and highly explorative user experience that gives a broader understanding of the collection of artworks.
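Faceted browsing along these dimensions can be sketched as a simple filter. The `browse` function and the three hand-written records below are illustrative assumptions; they are not taken from the Web Gallery of Art dataset or the ArtVis code, and the years are approximate.

```python
from typing import Dict, List, Optional, Union

Artwork = Dict[str, Union[str, int]]

# Hand-written sample records for illustration only.
ARTWORKS: List[Artwork] = [
    {"title": "The Night Watch", "artist": "Rembrandt",
     "museum": "Rijksmuseum", "type": "painting", "year": 1642},
    {"title": "The Milkmaid", "artist": "Johannes Vermeer",
     "museum": "Rijksmuseum", "type": "painting", "year": 1658},
    {"title": "David", "artist": "Michelangelo",
     "museum": "Galleria dell'Accademia", "type": "sculpture", "year": 1504},
]


def browse(items: List[Artwork],
           start_year: Optional[int] = None,
           end_year: Optional[int] = None,
           **facets: str) -> List[Artwork]:
    """Keep the items that match every given facet and fall within the period."""
    selected = []
    for item in items:
        if any(item.get(key) != value for key, value in facets.items()):
            continue
        if start_year is not None and item["year"] < start_year:
            continue
        if end_year is not None and item["year"] > end_year:
            continue
        selected.append(item)
    return selected


rijks_paintings = browse(ARTWORKS, museum="Rijksmuseum", type="painting")
before_1600 = browse(ARTWORKS, end_year=1600)
print([a["title"] for a in rijks_paintings])
print([a["title"] for a in before_1600])
```

Each keyword argument narrows one dimension, so combining museum, type and period corresponds to selecting several facets at once.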

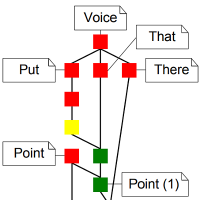

Mudra is a unified multimodal interaction framework supporting the integrated processing of low-level data streams as well as high-level semantic inferences. Our solution is based on a central fact base in combination with a declarative rule-based language to derive new facts at different abstraction levels. The architecture of the Mudra framework consists of three layers. At the infrastructure level, we support the incorporation of arbitrary input modalities, including skeleton tracking via Microsoft’s Xbox Kinect, multi-touch via TUIO and Midas, voice recognition via CMU Sphinx and accelerometer data via SunSPOTs. In the core layer, a very efficient inference engine (CLIPS) was substantially extended for the continuous processing of events. The application layer provides flexible handlers for end-user applications or fission frameworks, with the possibility to feed application-level entities back to the core layer.
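How a fact base and declarative rules can derive facts at higher abstraction levels is sketched below with a naive forward-chaining loop. This is a stand-in written in plain Python, not CLIPS and not Mudra's rule language; the fact names, the 1.5 threshold and the two rules are hypothetical.

```python
from typing import Callable, List, Set, Tuple

Fact = Tuple[object, ...]
Rule = Callable[[Set[Fact]], Set[Fact]]


def infer(facts: Set[Fact], rules: List[Rule]) -> Set[Fact]:
    """Apply all rules repeatedly until no new fact is derived."""
    known = set(facts)
    while True:
        derived: Set[Fact] = set()
        for rule in rules:
            derived |= rule(known)
        if derived <= known:  # fixpoint reached: nothing new
            return known
        known |= derived


def hand_raised(facts: Set[Fact]) -> Set[Fact]:
    """Low-level tracking data -> mid-level posture fact."""
    return {("posture", "hand_raised")
            for f in facts
            if f[0] == "hand_height" and f[1] > 1.5}


def pause_command(facts: Set[Fact]) -> Set[Fact]:
    """Posture plus speech -> high-level multimodal command."""
    if ("posture", "hand_raised") in facts and ("speech", "stop") in facts:
        return {("command", "pause")}
    return set()


# Hypothetical facts asserted by a skeleton tracker and a speech recogniser.
sensor_facts = {("hand_height", 1.8), ("speech", "stop")}
result = infer(sensor_facts, [pause_command, hand_raised])
print(("command", "pause") in result)  # True
```

The command is only derived in a second pass, once the posture fact exists, which is the point of keeping all abstraction levels in one shared fact base.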

The main goal of the Midas framework is to provide developers with adequate software engineering abstractions to close the gap between the evolution of multi-touch technology and software detection mechanisms. We advocate the use of a rule language which allows programmers to express gestures in a declarative way. Complex gestures which are extremely hard to implement with traditional approaches can be expressed in one or multiple rules which are easy to understand.

The number of youngsters (from 18 to 24 years old) who leave school without having obtained an upper-secondary education degree and who do not follow any type of education is much higher within the Brussels Capital Region than the Belgian average. The rate for Brussels is also higher than in the Flemish and Walloon Regions. In 2014, the school dropout rate for the Brussels Capital Region was 14.4%, compared to 7% for Flanders and 12.9% for Wallonia.

We present a solution that uses explicit gestures and implicit dance moves to control the visual augmentation of a live music performance. We further illustrate how our framework overcomes limitations of existing gesture classification systems by providing a precise recognition solution based on a single gesture sample in combination with expert knowledge. The presented approach enables more dynamic and spontaneous performances and, in combination with indirect augmented reality, leads to a more intense interaction between artist and audience.

The MobiCraNT project aims at the development of software engineering principles and patterns for the development of mobile cross-media applications that operate in a heterogeneous distributed setting and interact using cross-media technology.

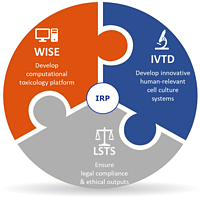

Currently, there are no validated animal-free replacement methods to assess repeated dose toxicity. This poses serious problems for the development of new chemical compounds across a variety of sectors, in particular cosmetics, where animal use is now fully banned in the EU. Advancing safety assessment of chemicals without the use of animal testing is thus urgently required to ensure a level of safety that is at least equivalent to that obtained through animal testing.

In this project, WISE studied the problems related to the evolution of ontologies. Like everything in the world around us, ontologies are subject to change. Ontologies evolve as a consequence of changes in the domains they describe as well as changes in business and user requirements. Because ontologies are intended to be used and extended by other ontologies, and because they are deployed in a highly decentralized environment such as the Web, ontology evolution is far from trivial. The fact that ontologies depend on other ontologies means that the consequences of changes don’t remain local to the ontology itself, but affect dependent ontologies as well. Furthermore, the decentralized nature of the Web makes it impossible to simply propagate changes to dependent artifacts.

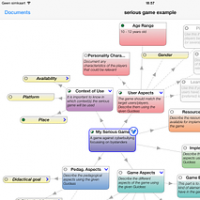

A first purpose of this project is to investigate the cognitive processing involved in educational games and its impact on learning. The second purpose is to investigate how we can influence these cognitive processes by using adaptive techniques. Among others, we investigate the impact of the personality, the learning style, as well as the motivational aspects of learning by manipulating different aspects of the educational game.

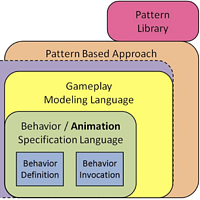

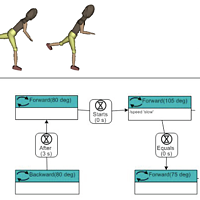

The major goal of this project is to develop a generic design-pattern-based methodology for specifying behaviors at a conceptual level for computer games and other interactive media applications such as virtual museums and virtual learning platforms.

The main purpose of this project is to introduce conceptual modeling concepts to specify complex 3D objects in virtual worlds and to rigorously define the semantics of these new modeling concepts using logic.

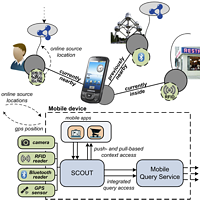

SCOUT is a mobile application development framework that supports the development of mobile applications that are aware of the user's context (e.g., profile and device characteristics), their current (physical) environment, and the people, objects and places in it. By exploiting this knowledge about the user and their environment, such applications are able to provide personalized information and services to the user. SCOUT supports different sensing technologies to become aware of the user's surrounding environment (and the physical entities in it), and is primarily based on Web technologies for communication and data acquisition, and Semantic Web technologies for integrating and enriching the knowledge present in the decentralized data sources.

This project investigates the distributed querying of ontology-based data stored in a distributed collection of Semantic Web systems. With the data not being stored in a single repository, but being offered through a network of connected repositories, we come one step closer to a true Web-like platform for information sharing. The querying and retrieval of data from that network needs to take the aspect of distribution into account without losing the benefits of the formal grounding for dealing with ontology-based information.

In the context of the European epidemiological study EURALOC, WISE developed a mobile app to predict, track, calculate and simulate the eye lens radiation dose received by an interventional cardiologist during procedures.

The app, called mEyeDose, is free and available on http://www.meyedose.eu/

The flyer can be downloaded here

The goal of this project is to research in a scientifically founded manner how ICT-based interventions (or intervention parts) can be used in the battle against (cyber) bullying. The emphasis is on mapping the problem of (cyber) bullying as well as on developing and testing dynamic, state-of-the-art ICT applications to tackle the problem. To give this project shape, the so-called “Intervention Mapping Approach” is followed.

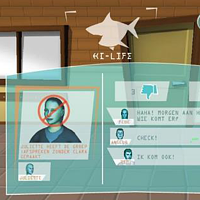

Friendly ATTAC will study and develop an innovative ICT tool to help youngsters deal with cyberbullying issues. By means of highly personalized virtual experience scenarios, providing players with immediate feedback in a safe computer-mediated environment, we will attempt to modify relevant determinants of behaviours related to the roles of bullies, bystanders and victims.

GRAPPLE is an EU FP7 STREP project aiming at providing learners with a technology-enhanced learning (TEL) environment that guides them through a life-long learning experience, automatically adapting to personal preferences, prior knowledge, skills and competences, learning goals and the personal or social context in which the learning takes place.

As a project partner, WISE is focusing on adaptivity for new learning media, specifically Virtual Reality, to enhance the personalized learning experience of e-learners.

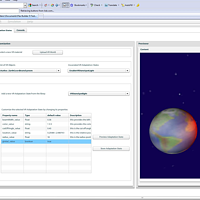

GuideaMaps is a flexible tablet (iPad) app that supports the requirements elicitation phase for domain-specific software. It is usable by people with and without a computing background, as building domain-specific software requires the expertise of people with very different backgrounds. The app guides the users through the requirements elicitation by providing a (customizable) list of issues to consider. The app provides explanations, indicates which issues are required and which are optional, provides options and alternatives, indicates the impacts of choices, and documents choices made. The tool can be used in meetings or individually.

In this project we want to combine the research activities done in the context of software localization with those done in the context of website design. We believe that research in localization and internationalization of websites (and software in general) may benefit from a cooperation between researchers from the software localization community (who are mainly linguists or computational linguists) and researchers from the web design community (mainly computer scientists). Such a cooperation may give new insights and may result in new techniques in both domains.

QuTi is a research project performed in the context of iBrussels, a research project on Wi-Fi architecture financed by the Brussels Capital Region and supervised by the Centrum voor Informatica voor het Brussels Gewest (CIBG). In QuTi, WISE developed a framework that provides real-time information on queue-sensitive events. QuTi relies on existing detection technologies to monitor crowds and measure how busy a venue is, and delivers this time-sensitive information in real time through a Web site and a mobile application. Based on this information, visitors can decide on the best time to visit.

The framework has been applied to measure the traffic in the student restaurant of the VUB.

For years, the logic course in the first year of our Bachelor in Computer Science was a serious hurdle for many students. Most computer science students perceive the formal and abstract mechanisms of logic as difficult and awkward to deal with. We have seen a lot of procrastination with regard to studying this subject.

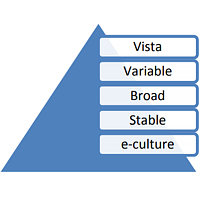

VariBru is a research project initiated in the framework of the Brussels Impulse Programme for ICT. It is supported by the Brussels Capital Region. The VariBru project addresses the strategic problems of software-intensive product builders. Its innovation goal is to perform research in four industrially relevant domains of software variability: software variability management, software product configurability, variability in live contexts, and variability in intention-aware products.

As a project partner, WISE is focusing on modeling software variability. Read more on http://www.featureassembly.com

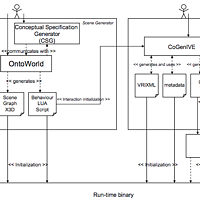

The proposed research will result in a set of generic concepts and techniques usable for conceptual modelling and realizing virtual environments. The ultimate goal is to facilitate and shorten the development process of virtual environments by means of conceptual specifications.

In the project, WISE will develop a set of generic concepts usable for the conceptual modeling of the static part of a virtual environment, as well as for specifying the behaviors required in the virtual environment. WISE will also investigate how the actual virtual environments can be realized by means of such high-level conceptual specifications (i.e. code generation). Tool support will also be developed.

The VR-WISE research started some years after WISE was founded. The focus of this research was on the conceptual modeling of Virtual Reality Environments.

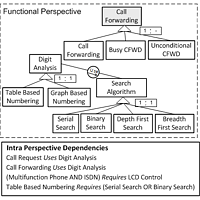

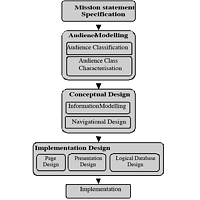

WISE was among the first to develop a Web site design method, called WSDM (1998). This method followed a completely new approach to designing Web applications, called the ‘audience driven’ approach, which is nowadays followed by many Web design methods. The original WSDM method and its associated modeling formalisms have evolved over the years into a complete ‘semantic’ web design method, both applying and deploying semantic web technology, and supporting the generation of semantic annotations. Various master's and PhD students have considered, and still consider, a variety of additional design issues, such as adaptivity, localization, accessibility, and social tagging, while others have focused on code generation and tool support.